
Research Abstracts - 2006


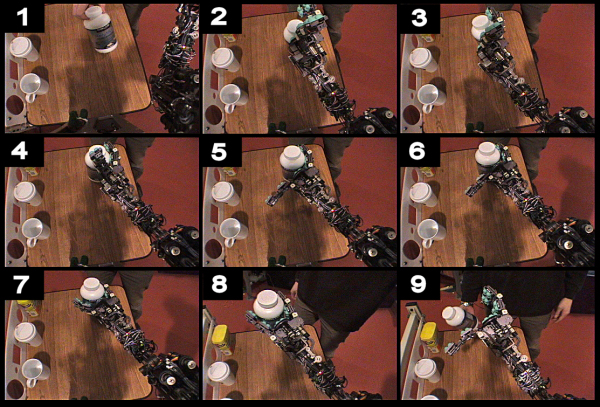

Sensitive Manipulation

Eduardo Torres-Jara & Lorenzo Natale

Abstract

We are interested in studying how haptic feedback can be used in a humanoid robot to grasp unknown objects. Studies of humans have revealed the importance of somatosensory input (force and touch) during manipulation tasks [1]. Because of various technological limitations, robotic manipulation has relied more on vision than on haptic feedback. For this reason, part of our research has been devoted to addressing these limitations in the design and realization of our robotic platform.

Obrero is a humanoid robot that consists of a 2 degree-of-freedom (DOF) head, a 6 DOF arm, and a 6 DOF hand. The arm and the hand are equipped with series elastic actuators, which provide force feedback at each joint. Additional haptic feedback is given by 160 tactile sensors mounted on the fingers (more details about the platform can be found in [2] and [3]). The intrinsic compliance of the robot and the sensitivity of the tactile sensors allow the robot to safely explore and interact with the environment. This includes, for example, grasping unknown objects on a table in front of the robot (see Figure 1).

Grasping and touching offer intimate access to objects and their properties. We are interested in investigating how an artificial system can exploit interaction with the environment to learn about it. In previous work, we have shown how contact with objects can aid in the development of haptic and visual perception [4,5,6]. During grasping, the sensory system allows the robot to extract information about the object (for example, its weight, shape, or size). We plan to investigate how this wealth of information can be integrated with visual feedback to improve the robot's perceptual and manipulation capabilities.
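The grasping behavior summarized in the caption of Figure 1 can be read as a short sequence of phases in which vision is used only to start the reach and touch drives everything afterwards. The Python sketch below illustrates that reading only; it is not the controller that runs on Obrero. The phase names, the `next_phase` and `run_sequence` functions, and the boolean sensor flags are all hypothetical and stand in for whatever signals the real system uses.

```python
from enum import Enum, auto


class Phase(Enum):
    """Hypothetical phases, named after the frames described in Figure 1."""
    WAIT_FOR_MOTION = auto()  # frame 1: object waved in front of the robot
    REACH = auto()            # frame 2: hand moves towards the detected motion
    EXPLORE = auto()          # frames 3 to 6: tactile search around that area
    GRASP = auto()            # frames 7 to 8: fingers close on the object
    LIFT = auto()             # frames 7 to 8: object is lifted
    RELEASE = auto()          # frame 9: object is released
    DONE = auto()


def next_phase(phase, motion_detected=False, hand_in_place=False,
               fingers_touch=False, palm_touches=False,
               grasp_closed=False, lifted=False):
    """Return the phase that follows `phase` for the given (assumed) flags."""
    if phase is Phase.WAIT_FOR_MOTION:
        # The only transition that depends on visual information.
        return Phase.REACH if motion_detected else phase
    if phase is Phase.REACH:
        return Phase.EXPLORE if hand_in_place else phase
    if phase is Phase.EXPLORE:
        # Keep exploring until both the fingers and the palm report contact.
        return Phase.GRASP if (fingers_touch and palm_touches) else phase
    if phase is Phase.GRASP:
        return Phase.LIFT if grasp_closed else phase
    if phase is Phase.LIFT:
        return Phase.RELEASE if lifted else phase
    if phase is Phase.RELEASE:
        return Phase.DONE
    return phase


def run_sequence(events):
    """Feed a list of flag dictionaries through the phases; return the history."""
    phase = Phase.WAIT_FOR_MOTION
    history = [phase]
    for flags in events:
        phase = next_phase(phase, **flags)
        history.append(phase)
    return history
```

Calling `run_sequence` with a list of flag dictionaries, for example `[{"motion_detected": True}, {"hand_in_place": True}]`, steps through the phases one event at a time. Keeping the transition logic in a single pure function makes it easy to see that only the first transition consults vision.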

Figure 1. Grasping sequence from the robot's point of view: an example. Frame 1: An object is waved in front of the robot to attract its attention. Frame 2: The robot detects the motion and moves the hand towards it. This is the only part of the sequence where visual information is actually used. Frames 3 to 6: The robot explores the space around the area where motion was detected until the fingers and the palm touch the object. Frames 7 to 8: The robot grasps the object and lifts it. Frame 9: The robot releases the object.

References:

[1] R.S. Johansson. How is grasping modified by somatosensory input? In Motor Control: Concepts and Issues, Humphrey, D.R. and Freud, H.J. (Eds), pp. 331-355. John Wiley and Sons Ltd, Chichester, UK, 1991.

[2] Eduardo Torres-Jara. Obrero: a platform for sensitive manipulation. In Humanoid Robots, 2005 5th IEEE-RAS International Conference, pp. 327-332, Tsukuba, Japan, Dec. 5, 2005.

[3] Eduardo Torres-Jara, Iuliu Vasilescu and Raul Coral. A Soft Touch: Compliant tactile sensors for sensitive manipulation. CSAIL Technical Report MIT-CSAIL-TR-2006-014, http://hdl.handle.net/1721.1/31220, Mar. 1, 2006.

[4] P. Fitzpatrick, G. Metta, L. Natale, S. Rao and G. Sandini. Learning about objects through action - initial steps towards artificial cognition. In Proc. of the IEEE International Conf. on Robotics and Automation, Taipei, Taiwan, May 2003.

[5] L. Natale, G. Metta and G. Sandini. Learning haptic representation of objects. In International Conf. on Intelligent Manipulation and Grasping, Genoa, Italy, July 2004.

[6] E. Torres-Jara, L. Natale and P. Fitzpatrick. Tapping into Touch. In Fifth International Workshop on Epigenetic Robotics, Nara, Japan, July 22-24, 2005.
