| Technical Reports | Work Products | Research Abstracts | Historical Collections |

![]()

|

Research

Abstracts - 2006

|

Space-Time Multiplexing of Stream ProgramsMichael Gordon & Saman AmarasingheWhatWe are developing compiler technology for StreamIt [3], a high-level stream programming language that aims for portability across communication-exposed machines. StreamIt contains basic constructs that expose the parallelism and communication of streaming applications, independent of the topology or granularity of the underlying architecture. There are two methods for compiling stream programs to a parallel architecture: time multiplexing and space multiplexing. Time multiplexing provides efficient load balancing by synchronizing operations through memory, while space multiplexing provides producer-consumer locality and scales with spatially-aware architectures. In this work, we are developing a hybrid backend that uses time multiplexing to switch between several space-multiplexed phases, thereby leveraging the benefits of both execution models. Our compiler targets the MIT Raw architecture [4], a tiled machine with fine-grained, programmable communication between processors. WhyAs we approach the billion-transistor era, a number of emerging architectures are addressing the wire delay problem by replicating the basic processing unit and exposing the communication between units to a software layer. These machines are especially well-suited for streaming applications that have regular communication patterns and widespread parallelism. However, today's communication-exposed architectures are lacking a portable programming model. If these machines are to be widely used, it is imperative that one be able to write a program once, in a high-level language, and rely on a compiler to produce an efficient executable on any of the candidate targets. HowGiven a stream graph, composed of filters (actors) and uni-directional FIFO channels connecting the filters, what is the best way to execute it? If we are targeting a uniprocessor, the most obvious technique is time multiplexing where we run one filter at a time on the processor. Load balancing is not an issue and the memory interface provides synchronization. Problems arise if we target a multiprocessor. Time multiplexing leads to long latency, lots of memory traffic (possibly with bad cache behavior), and the utilization depends on the presence of data-parallel filters that can be spread across the chip. A second approach is to run all the filters in parallel, each on its own tile. This is termed space multiplexing. Our first StreamIt compiler for Raw uses this technique [1]. Space multiplexing affords (i) no filter-swapping overhead, (ii) reduced memory traffic, (iii) localized communication, and (iv) tighter latencies. Due to these properties, space multiplexing scales extremely well, although it is subject to effective load balancing techniques. Toward that end, merging and splitting components of the stream graph until it is composed of n load balanced filters, where n <= the number of processing elements, proved difficult, as the optimization technique is constrained by several competing factors. Further, the use of space multiplexing alone forced us to support arbitrarily complex communication patterns that did not map efficiently to the 2-D mesh in Raw. The technique employed by our new backend for Raw is to merge the advantages of space multiplexing and time multiplexing. This hybrid approach will time multiplex different groups of filters and space multiplex the filters within a group. We call each group a space-time slice or just a slice. It is the responsibility of the compiler to schedule the slices, calculating both an ordering of slices and a placement for the filters of the slices. We leverage techniques traditionally applied to schedule machine instructions in loop nests. Currently, we employ software pipelining techniques to slacken dependencies between slices and allow for more flexibility scheduling slices during steady-state execution. We also recognize parts of the stream graph that can be space-multiplexed in a fine-grained, systolic fashion. Using our linear analysis framework [2], we can discover the linear sections of a streaming application. We define a linear section of a stream graph as a group of filters that compute a linear combination of their inputs. Many ubiquitous DSP kernels are linear including FIR, low and high-pass filters, and DFT. These linear computations can be represented as a matrix multiplication and efficiently mapped to Raw. Linear slices of the stream graph are space-multiplexed across tiles of the chip using parameterized computation and communication code. The matrix representations of the linear filters are broken into pieces that the compiler targets for aggressive code generation. This results in optimal usage of the tiles for each piece.

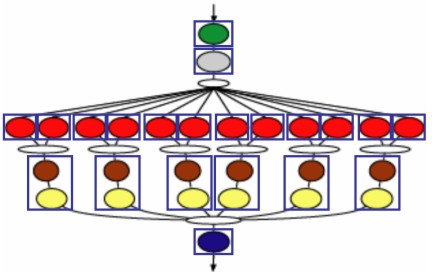

We will now present a small example to solidify the reader's understanding of our work. In the above figure we show the slice graph for our FMRadio benchmark, an implementation of a software FMRadio with a 7-way equalizer. The exact implementation is irrelevant to our discussion. In the Figure, the colored ovals denote filters and the blue boxes around filters denote the slices of the graph. In this particular application the 12 red filters and the green filter each perform an equal workload and together account for over 95% of the workload of the steady-state as calculated by our static workload estimation algorithm.

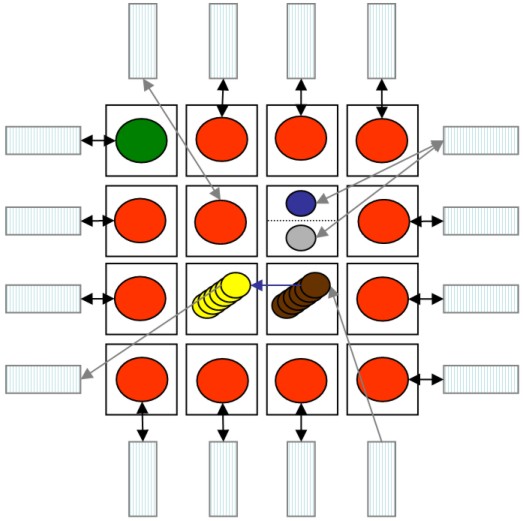

An example steady-state execution of the FMRadio application on a 16 tile Raw configuration is shown above. In the figure we see the 16 raw tiles represented as a 4x4 array of squares. The off-chip DRAM modules are shown as rectangles attached to the border tiles. In the mapping, the 12 slices composed of a red filter and the one slice composed of the green filter each occupy their own tile. The blue filter and the gray filter share a tile. The 6 slices composed of a brown filter and a yellow filter occupy 2 tiles. In the figure, the black arrows denote communication to and from off-chip memory over Raw's static network, the gray arrows denote communication to and from off-chip memory using Raw's dynamic network, and the blue arrows denote intra-slice communication between filters of a single slice utilizing Raw's static network. Since the steady-state is software pipelined and adequately buffered, we do not directly communicate data between slices during its execution. Before each steady-state we perform a data-reorganization stage that shuttles data between DRAMs to prepare for the next steady-state iteration. In this example, the data-reorganization stage performs the data splitting occurring after the gray filter, the joining before each brown filter, and the joining before the blue filter. In this mapping, each bottleneck filter (red or green) occupies its own tile and is busy computing nearly the entire steady-state for very high computational utilization. These bottleneck tiles are not burdened with other work not on the critical path. This mapping is completely automated and achieves a 2-fold increase in terms of throughput versus a space-multiplexed mapping of the FMRadio application. ProgressCurrently, we have completed a second version of our space-time multiplexing Raw backend for StreamIt and are in the process of evaluating its performance and scalability. We have a robust implementation of inter-slice communication using Raw's off-chip streaming memory interface. For intra-slice communication, we exploit Raw's static network and translate StreamIt's filter code into C code to be executed on the compute processors. For many common cases, we have completed parameterized code generation for linear filters that generates assembly directly.

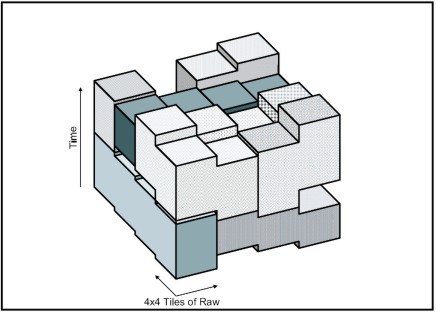

As for the high-level algorithms, we have implemented a greedy algorithm to partition the stream graph into slices. We translate the slice scheduling problem into a form that approximates a well-studied optimization problem. We translate our problem into a 3-dimensional bin-packing problem with irregularly shaped, transformable objects. In our translation, each slice is represented by a shape, and each Raw tile is a represented as a bin. See the figure above for a sample 3-dimensional bin-packing of slice objects. The shape of each object is dependent upon the properties of the filters of the slice it represents and the filter's assignment to bins (tiles). The height of a slice object is calculated from a static estimation of the slice's work load as it varies with each filter of the slice. In the figure above, note the step shape of each slice due to the pipelining of the filters that compose the slice. We use simulated annealing to arrive at an optimized solution to the bin-packing and thus a schedule. Although in previous work simulated annealing has been applied to only regular 3-dimensional bin-packing (i.e., non-transformable, rectilinear objects), our initial results are very encouraging. FutureIn the near future we would like to further investigate our partitioning and scheduling algorithms. Additionally, we will further expand space multiplexing as we investigate additional parallelism schemes such as filter fission (breaking a filter into multiple, smaller filters), state-space filter representations, and optimized linear code generation for sparse matrices. We are also working on a new intermediate representation for our compiler that will allow us to directly generate assembly code for Raw's compute processors. This will overcome some of the inefficiencies in generating stream code via a procedural framework. Research SupportThis work is supported in part by a grant from DARPA (PCA F29601-04-2-0166), awards from NSF (CISE EIA-0071841, ITR ACI-0325297, NGS CNS-0305453) and the MIT-Oxygen Project. References:[1] Michael Gordon, William Thies, Michal Karczmarek, Jasper Lin, Ali S. Meli, Andrew A. Lamb, Chris Leger, Jeremy Wong, Henry Hoffmann, David Maze, and Saman Amarasinghe. A Stream Compiler for Communication-Exposed Architectures. In ASPLOS, 2002. [2] Andrew A. Lamb, William Thies, and Saman Amarasinghe. Linear Analysis and Optimization of Stream Programs. In ACM Conference on Programming Language Design and Implementation, 2003. [3] William Thies, Michal Karczmarek, and Saman Amarasinghe. StreamIt: A Language for Streaming Applications. In Proc. of the International Conference of Compiler Construction, Grenoble, France, 2002. [4] Elliot Waingold, Michael Taylor, Devabhaktuni Srikrishna, Vivek Sarkar, Walter Lee, Victor Lee, Jang Kim, Matthew Frank, Peter Finch, Rajeev Barua, Jonathan Babb, Saman Amarasinghe, and Anant Agarwal. Baring It All To Software: Raw Machines. IEEE Computer, 30(9), 1997. |

||||

|