| Research Abstracts Home | CSAIL Digital Archive | Research Activities | CSAIL Home |

![]()

|

Research

Abstracts - 2007

|

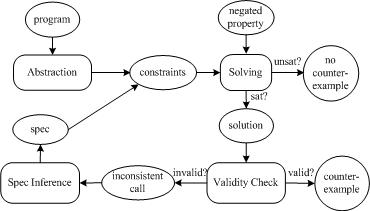

Automating Modular Program Verification by Inferring SpecificationsMana TaghdiriIntroductionTraditional techniques for reasoning about the correctness of a program made extensive use of modularization. Each procedure would be checked against its specification, using the specifications of its called procedures as surrogates for their code. Automating such approaches has motivated several tools. Many of them (e.g. ESC/Java, Hob, Jahob), however, automate only the checking phase, assuming that the specifications of all called procedures are provided by users. This assumption places a considerable burden on the users and thus limits the applicability of such tools. To avoid this problem, some tools (e.g. Jalloy and Magic) inline all procedure calls whose specifications are not available. However, such inlining does not scale. The goal of our project is to develop a modular static technique to check data structure properties of programs without requiring any user-provided annotations except the top-level property. ApproachWe have developed a new modular technique for checking a given procedure against a property [1]. The user need only provide the property to check; no further annotations are required. The specifications of all called procedures will be inferred automatically from the code. Of course, extracting a specification that summarizes in full the observable behavior of a procedure is not feasible. For this application, however, the inferred specifications need capture only as much information about the procedures as is required to establish the correctness of the main procedure being checked. Specifications are inferred iteratively using the "counterexample-guided abstraction refinement" framework [2] as shown in the Figure below. For each procedure call, an initial specification is computed that over-approximates the effects of that procedure. These specifications are then refined as needed in response to spurious counterexamples. The analysis is performed on finitized procedures and is exhaustive with respect to a bounded heap. When the analysis terminates, any remaining counterexample is guaranteed not to be spurious. Absence of a counterexample ensures that the property holds within the analyzed bounds.

The initial abstraction of call sites can be arbitrarily approximate. For example, empty specifications that allow call sites to arbitrarily mutate the state of a program can be used as initial specifications. However, starting from richer initial specifications can reduce the number of refinements, and thus, improve the performance. The main challenge is to compute specifications that are safe, meaning that they account for all possible executions of the procedure being analyzed, and succinct, and yet give useful information about the underlying code (that is, they are not too approximate). We have developed a technique [3] that abstracts the behavior of a procedure by computing bounds on the final values of each field and variable. This is done by an application of the abstract interpretation framework in which a procedure is evaluated symbolically, with each field and variable initially holding a value represented by a fresh constant. Each statement updates an environment mapping fields and variables to values represented as relational expressions. After an if-statement, the branches are merged by forming a union expression. A loop is handled by forming a transitive closure. The analysis maintains both upper and lower bounds on the values of variables and fields, so that in some cases an exact result can be obtained. ResultsWe have implemented our technique in a tool called Karun which translates both the abstract program and the given property to a boolean formula using Forge, and thereby indirectly using Kodkod and the Zchaff SAT solver. New specifications are inferred from a proof of unsatisfiability generated by Zchaff. Using Karun, we were able to check some properties of a graph package that uses several data structures stored in the heap. We also used Karun to check an open-source job scheduling library and discovered two previously unknown bugs in that code. AcknowledgementThis work is supported in part by the National Science Foundation (ITR Program, Award 0541183). References:[1] Mana Taghdiri and Daniel Jackson. Inferring Specifications to Detect Errors in Code. In Automated Software Engineering Journal , Volume 14, Issue 1. [2] E. Clarke and O. Grumberg and S. Jha and Y. Lu and H. Veith. Counterexample-guided Abstraction Refinement. In Proc. of Computer Aided Verification, pp 154--169, 2000. [3] Mana Taghdiri, Robert Seater, and Daniel Jackson. Lightweight Extraction of Syntactic Specifications. In Proc. of Foundations of Software Engineering, November 2006, Portland, Oregon. |

||||

|